Floating Point Numbers

It's called floating point because the point in the number can float to different positions. This is nothing but scientific notation, where we can move the point anywhere by adjusting the exponent.

Decimal is how we write numbers in base 10. A decimal number can be whole or fractional.

Floating point is just the computer's version of scientific notation.

I always assumed that floating point numbers were simply handled as two different integers separated by a dot. But this is completely wrong. A floating point value is stored as one single value, usually in floating point specific registers, with a very different binary layout and its own standard.

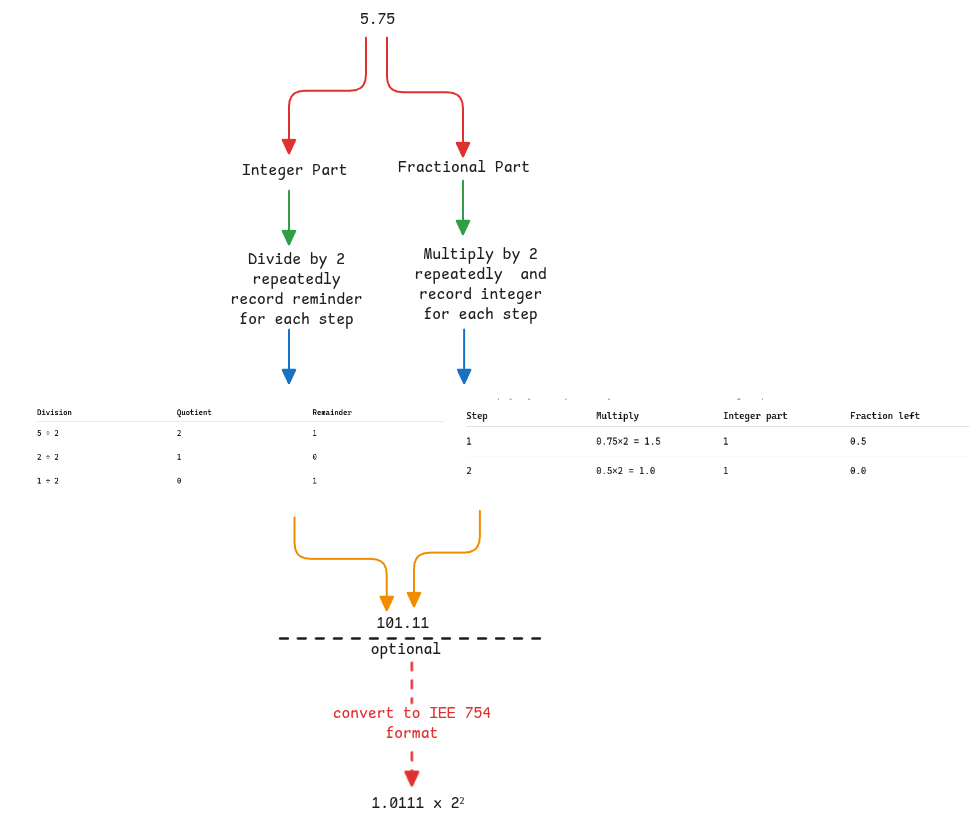

Converting floating numbers to binary

The integer and fractional parts are handled differently. They're not treated as just two integers separated by a decimal point.

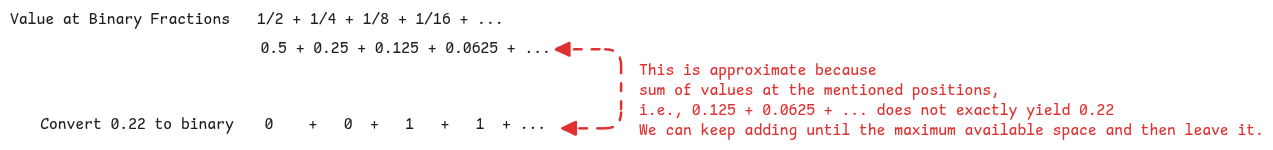
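The conversion can be sketched in code. This is only an illustration that assumes the usual pencil-and-paper method (repeated division by 2 for the integer part, repeated multiplication by 2 for the fractional part); the function names are made up for this note, and the fraction is passed as an exact numerator and denominator so the sketch itself doesn't suffer from rounding.

```python
def int_part_to_binary(n: int) -> str:
    """Binary digits of a non-negative integer via repeated division by 2."""
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # the remainder is the next lowest bit
        n //= 2
    return "".join(reversed(digits)) or "0"


def frac_part_to_binary(numerator: int, denominator: int, max_bits: int = 20) -> str:
    """Binary digits of numerator/denominator (a value below 1) via repeated doubling."""
    bits = []
    for _ in range(max_bits):
        numerator *= 2
        if numerator >= denominator:
            bits.append("1")          # doubling crossed 1, so this bit is set
            numerator -= denominator
        else:
            bits.append("0")
        if numerator == 0:            # the fraction terminated exactly
            break
    return "".join(bits)


print(int_part_to_binary(585))            # 1001001001
print(frac_part_to_binary(22, 100, 8))    # 00111000
print(frac_part_to_binary(1, 4))          # 01
```

Note that 0.25 terminates after two bits, while 0.22 keeps producing bits for as long as we let it run. That never-ending tail is where the inaccuracy comes from.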

Floating points aren't accurate

The above diagram is only a mental model for understanding why it isn't accurate.
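A quick way to see the inaccuracy for yourself is the small Python check below. Python's `float` is a 64 bit IEEE 754 double, and `Decimal(x)` prints the exact value that was actually stored. The variable names are just for illustration.

```python
from decimal import Decimal

total = 0.1 + 0.2
print(total == 0.3)        # False

# Decimal(float) shows the exact binary value that was stored for 0.1
stored_tenth = Decimal(0.1)
print(stored_tenth)
print(stored_tenth == Decimal("0.1"))  # False
```

The stored value is the nearest number that fits in the available bits, not 0.1 itself.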

In reality, to convert a decimal number to its floating point representation, the computer does the following:

- If the number is 585.22, it first rewrites it as a rational number: 58522/100.

- Then it converts both the numerator and the denominator to binary.

- It performs division on these binary numbers until the quotient has 53 significant bits (the precision of a 64 bit double).

- Then it converts the answer to the format described below.
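The steps above can be sketched roughly as follows. This is a simplified illustration, not how any particular runtime implements parsing: it assumes a positive number in the normal range, uses Python's `Fraction` for the exact rational, and the function name is hypothetical.

```python
import math
from fractions import Fraction


def to_double_via_division(numerator: int, denominator: int) -> tuple[int, int]:
    """Return (significand, exponent) so that significand * 2**exponent
    approximates numerator/denominator with a 53 bit significand."""
    q = Fraction(numerator, denominator)
    exponent = 0
    # Scale by powers of two until the quotient lies in [2**52, 2**53)
    while q < 2 ** 52:
        q *= 2
        exponent -= 1
    while q >= 2 ** 53:
        q /= 2
        exponent += 1
    significand = round(q)  # round to nearest, ties to even
    if significand == 2 ** 53:  # rounding overflowed into a 54th bit
        significand //= 2
        exponent += 1
    return significand, exponent


significand, exponent = to_double_via_division(58522, 100)
print(significand.bit_length())                    # 53
print(math.ldexp(significand, exponent) == 585.22)  # True
```

The last line checks that the hand-rolled result matches what Python itself stores for the literal `585.22`.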

Standards for binary representation of floating numbers

Nearly all modern CPU architectures follow one single standard, IEEE 754, to represent floating point numbers. It uses scientific notation and normalization to ensure the integer part of the binary value is always just 1. The exponent is a power of 2, since the value is binary.

When we convert a nonzero decimal number to binary, there will be a 1 at some position for sure. Normalization keeps moving the binary point (to the left for values of 2 or more, to the right for values below 1) until it sits just after the first 1.

Finally, what's stored is only the sign bit + exponent + mantissa (the binary digits after the point). Here the main assumptions are -

- The integer part is understood to always be 1.

- The sizes of the sign, exponent and mantissa fields are fixed.

- The bias added to the exponent is known from the size of the format.

- The exponent is for base 2 since it's binary.

The exponent itself can be positive or negative depending on which way the point is moved to get just 1 before it.
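To make the layout concrete, here is a small sketch that pulls the three fields out of a 32 bit float (1 sign bit, 8 exponent bits, 23 mantissa bits) and rebuilds the value from them. It uses Python's standard `struct` module; the helper names are made up, and the rebuild formula only covers normal numbers (not zero, subnormals, infinity or NaN).

```python
import struct


def float32_fields(x: float) -> tuple[int, int, int]:
    """Split the 32 bit representation of x into (sign, exponent, mantissa)."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with the bias added
    mantissa = bits & 0x7FFFFF       # 23 bits after the implied "1."
    return sign, exponent, mantissa


def rebuild(sign: int, exponent: int, mantissa: int) -> float:
    """Reassemble a normal float32 value; the leading 1 is implied, not stored."""
    return (-1) ** sign * (1 + mantissa / 2 ** 23) * 2.0 ** (exponent - 127)


print(float32_fields(1.0))    # (0, 127, 0)
print(float32_fields(-2.0))   # (1, 128, 0)
print(rebuild(*float32_fields(585.22)))
```

For 1.0 the mantissa bits are all zero, because the only significant digit is the implied leading 1.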

Adding Bias to Exponent

In this floating point representation standard, we use scientific notation to represent the mantissa, so the exponent can be positive or negative. But the idea is to store only non-negative exponents to make comparison easier. Meaning, for two numbers of the same sign, the stored exponent can be compared directly to determine which number is greater or smaller.

Bias in English means having an opinion that leans away from the truth. That's exactly what's done in IEEE 754. The actual value of the exponent is shifted by a fixed value.

For example, in the case of a 32 bit float, the bias value is 127. This is because a 32 bit float has 8 bits reserved to represent the exponent. This means the actual exponent of a normal number can be -126 to +127, and the exponent bits hold the values 1 to 254 (the stored values 0 and 255 are reserved for zero, subnormals, infinity and NaN). We add 127 to the actual exponent to ensure only positive numbers are stored.
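The effect of the bias can be checked with a short sketch. It assumes the 32 bit format with a bias of 127, reads the stored exponent with `struct`, and shows that for positive floats the raw bit patterns sort in the same order as the values. The helper names are hypothetical.

```python
import struct

BIAS = 127  # bias of the 32 bit format


def float32_bits(x: float) -> int:
    """Raw 32 bit pattern of x, read as an unsigned integer."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    return bits


def stored_exponent(x: float) -> int:
    return (float32_bits(x) >> 23) & 0xFF


def actual_exponent(x: float) -> int:
    return stored_exponent(x) - BIAS


# 0.15625 is 1.25 * 2**-3, so the actual exponent is negative
print(actual_exponent(0.15625), stored_exponent(0.15625))  # -3 124

# For positive values, sorting by raw bits matches sorting by value
values = [585.22, 0.15625, 2.0, 1.5]
print(sorted(values, key=float32_bits) == sorted(values))  # True
```

Because the exponent field sits above the mantissa and never goes negative, a plain integer comparison of the bits is enough for positive numbers.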

Floating point in programming languages

When we instantiate floating point numbers in Java, the value is already converted to IEEE 754 format before it's stored in memory. It's a hardware convention that most programming languages follow directly.

In JavaScript, every value of type Number is represented in IEEE 754 double precision format. Meaning, even for whole numbers, only 53 bits of precision are available, so integers are safe only up to 2^53 - 1.
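The 53 bit limit is easy to reproduce. The sketch below uses Python rather than JavaScript, on the assumption that Python's `float` is the same 64 bit IEEE 754 double that JavaScript uses for Number, so the behaviour should match.

```python
LIMIT = 2 ** 53

# Up to 2**53, adding 1 still lands on an exactly representable value
print(float(LIMIT - 1) + 1 == float(LIMIT))   # True

# Past 2**53, the +1 is lost because there is no bit left to hold it
print(float(LIMIT) + 1 == float(LIMIT))       # True
print(float(LIMIT + 1) == float(LIMIT))       # True
```

Beyond this point only every second integer can be represented, then every fourth, and so on.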

FPU in CPU

The FPU is the component of the CPU that implements the IEEE 754 standard. The ALU handles integer arithmetic, and the FPU handles floating point calculations.